The 1st HoloMatic Future Day, with the theme of ‘A Robotic State of Mind’, was held in Wuhan recently. Renowned scholars and industry leaders in robotics from across the world were invited to introduce cutting-edge theories, research and applications of mobile robotics to the academic community in Wuhan and to explore how to address the challenges in the industrialization of mobile robotics. HoloMatic Future Day is an event held periodically by HoloMatic, a pioneer in series production-ready autonomous driving solutions. The event aims to promote conversation and collaboration between academia and industry.

The event was joined by renowned scholars and experts in the industry, including: Qionghai Dai, academician of the Chinese Academy of Engineering, professor of the Department of Automation and visiting professor of the School of Life Sciences at Tsinghua University; Frank Dellaert, full professor at the Georgia Institute of Technology and Chief 3D Vision Scientist at HoloMatic; Shaojie Shen, assistant professor of the Department of Electronic and Computer Engineering at Hong Kong University of Science and Technology (HKUST); Ayoung Kim, assistant professor of the Department of Civil and Environmental Engineering at Korea Advanced Institute of Science and Technology (KAIST); Laurent Kneip, assistant professor and research fellow of the School of Information Science and Technology at ShanghaiTech University; Guofeng Zhang, professor at Zhejiang University and awardee of Outstanding Youth Science Foundation; Huaping Liu, special research fellow of the Department of Computer Science and CSAI at Tsinghua University; Mingyang Li, R&D Director at Alibaba AI Labs; and Xin Zhao, Business Line Manager at TÜV SÜD Certification and Testing (China) Corporation of TÜV SÜD Greater China.

Experts shared their insights into the latest trends in mobile robotics in their keynote speeches

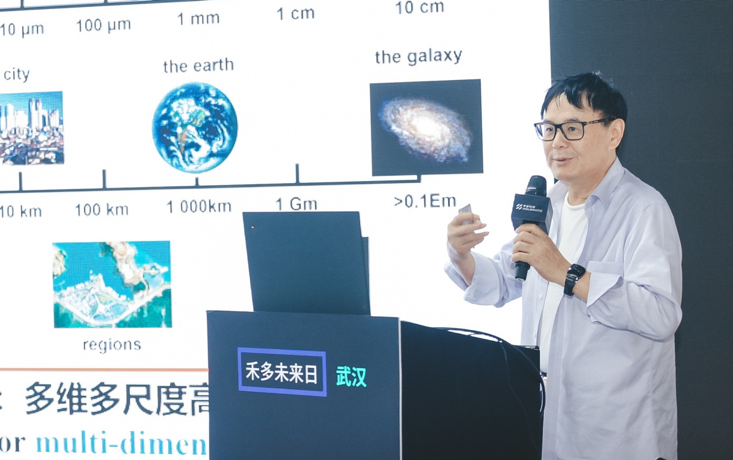

Qionghai Dai, academician of the Chinese Academy of Engineering, professor of the Department of Automation and visiting professor of the School of Life Sciences at Tsinghua University

Qionghai Dai, academician of the Chinese Academy of Engineering, professor of the Department of Automation and visiting professor of the School of Life Sciences at Tsinghua University, gave a keynote speech entitled “The New Direction of AI -- Light Field Vision”. Most of the vision and imaging units used in AI systems are monocular or binocular and are incapable of multi-dimensional imaging in the way human eyes are. The limitations of these units in time, space, angle, spectrum and dynamic range have hindered the research and application of mobile robotics in many use cases, such as L5 autonomous driving. Academician Dai has specialized in computational photography for many years. The 3rd generation unstructured multi-scale camera array from Academician Dai's research is capable of centralized distributed calibration, which addresses the conflict between depth of field and spatial resolution, and supports 360-degree, long-range, high-resolution and dynamic depth computing. Academician Dai and his team also introduced a 4th generation vision system based on photoelectric computing, which computes on optical signals by capturing diffraction and interference during signal transmission. Once the system is applied to autonomous driving, the power that the autonomous driving system consumes for computing in various use cases would be optimized.

Frank Dellaert, Full Professor at the Georgia Institute of Technology and Chief 3D Vision Scientist at HoloMatic

Frank Dellaert, full professor at the Georgia Institute of Technology and Chief 3D Vision Scientist at HoloMatic, gave a speech entitled “Widespread Application of Inference Based on Factor Graph and Automatic Differentiation in Robotics and Computer Vision”. In robotics and computer vision, Simultaneous Localization and Mapping (SLAM) and Structure from Motion (SFM) are two crucial and closely related techniques. In the speech, Professor Dellaert reviewed the application of factor graphs in SLAM, SFM, robotics and vision, and demonstrated batch and incremental processing algorithms for factor graphs as well as their advantages in processing complex trajectories.
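For readers unfamiliar with the idea, the following is a minimal sketch of batch inference on a factor graph, not the algorithms presented in the talk. It assumes a toy one-dimensional robot whose poses are scalars, with a prior factor, odometry factors and one loop-closure-style factor, all linear with Gaussian noise. The function names `solve_linear` and `optimize_pose_chain` and the factor tuple layout are hypothetical, chosen only for this illustration.

```python
def solve_linear(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - s) / M[i][i]
    return x


def optimize_pose_chain(n_poses, factors):
    """Batch least-squares solve over all factors at once.

    Each factor is (i, j, z, sigma) and measures x_j - x_i = z.
    A prior on x_j is written with i = None and measures x_j = z.
    (This tuple layout is an assumption made for the sketch.)
    """
    H = [[0.0] * n_poses for _ in range(n_poses)]  # information matrix
    g = [0.0] * n_poses                            # information vector
    for i, j, z, sigma in factors:
        w = 1.0 / sigma ** 2
        # Jacobian entries of the residual: +1 at x_j, -1 at x_i.
        entries = [(j, 1.0)] + ([(i, -1.0)] if i is not None else [])
        for a, ja in entries:
            g[a] += w * ja * z
            for b, jb in entries:
                H[a][b] += w * ja * jb
    return solve_linear(H, g)


factors = [
    (None, 0, 0.0, 1.0),  # prior: the robot starts at 0
    (0, 1, 1.0, 1.0),     # odometry: moved 1.0 from pose 0 to pose 1
    (1, 2, 1.0, 1.0),     # odometry: moved 1.0 from pose 1 to pose 2
    (0, 2, 2.2, 1.0),     # direct measurement between pose 0 and pose 2
]
poses = optimize_pose_chain(3, factors)
print(poses)
```

Because the odometry and the direct measurement disagree (2.0 versus 2.2), the solver spreads the discrepancy across the poses rather than trusting any single factor. An incremental method would update this solution as new factors arrive instead of rebuilding and re-solving the whole system.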

Shaojie Shen, Assistant Professor of the Department of Electronic and Computer Engineering at HKUST

Shaojie Shen, assistant professor of the Department of Electronic and Computer Engineering at HKUST, gave a keynote entitled “3D Visual Perception in Complex Environments -- 3D Reconstruction, State Estimation and Perception of Dynamic Objects”, which detailed how his team has been striving to make low-cost, small-sized drones operational in complex environments. The team is also researching other topics, including pose estimation, detection and tracking of dynamic objects, vehicle motion prediction and route planning.

Ayoung Kim, Assistant Professor of the Department of Civil and Environmental Engineering at KAIST

Ayoung Kim, assistant professor of the Department of Civil and Environmental Engineering at KAIST, gave a speech entitled “Perception-based SLAM in Urban Environment”. Professor Kim and her team have researched SLAM algorithms based on lidar and camera, with the aim of enabling autonomous vehicles to drive safely in complex urban environments. Using 2D and 3D lidars, they carried out measurements, built maps and generated complex data related to urban maps. They are also researching better ways to manually calibrate objects, as well as multimodal SLAM based on camera lidar and thermal lidar.

Laurent Kneip, Assistant Professor and Research Fellow of the School of Information Science and Technology at ShanghaiTech University

Laurent Kneip, assistant professor and research fellow of the School of Information Science and Technology at ShanghaiTech University, gave a keynote entitled “Spatial AI: A Higher-level SLAM with Embedded Expression”. He believes that Spatial AI is a higher-level form of representation that combines geometric information, semantic information and objects to understand scenes. It does not reconstruct the environment as a whole but reconstructs objects using semantic information (for instance, prior shape). It is therefore a data-driven SLAM architecture in the real sense.

Guofeng Zhang, Professor at Zhejiang University and Awardee of Outstanding Youth Science Foundation

Guofeng Zhang, professor at Zhejiang University and awardee of Outstanding Youth Science Foundation, gave a speech entitled “Vision SLAM Technology and Its Application in AR”. Professor Zhang believes that SLAM based on a single sensor has many limitations, because each type of sensor has its own advantages and disadvantages, so multi-sensor fusion is an inevitable trend. The smartphones we use today are already equipped with several sensors, including a camera and an IMU, and mobile robots can carry more. For instance, an autonomous driving system typically uses camera, LiDAR, GPS, IMU and odometer. By fusing a set of sensors through SLAM, we are able to achieve reliable high-precision localization. Currently, Professor Zhang’s team is focusing on the application of SLAM in AR and VR and intends to do more research on robotics and autonomous driving next.
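As a simple illustration of why fusion helps, and not a description of Professor Zhang's method, the sketch below combines independent position estimates by inverse-variance weighting. It assumes each sensor reports a scalar position with a known Gaussian variance. The function name `fuse` and the example numbers are hypothetical.

```python
def fuse(estimates):
    """Fuse independent scalar estimates given as (value, variance) pairs.

    Each estimate is weighted by the inverse of its variance, so more
    precise sensors contribute more to the result.
    """
    weights = [1.0 / var for _, var in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, 1.0 / total


# Hypothetical readings: a coarse GPS-like fix and a more precise
# LiDAR-like fix of the same position along one axis, in metres.
fused_value, fused_variance = fuse([(10.0, 4.0), (12.0, 1.0)])
print(fused_value, fused_variance)
```

The fused variance is smaller than that of either input, which is the basic reason a set of complementary sensors can localize more reliably than any single one. A full SLAM system fuses many such measurements over time and over many state variables.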

Huaping Liu, Special Research Fellow of the Department of Computer Science and CSAI at Tsinghua University

Huaping Liu, special research fellow of the Department of Computer Science and CSAI at Tsinghua University, gave a speech entitled “Deep Reinforcement Learning for Multi-modal Active Environmental Perception”. The main focus of Liu’s research is applied and operational robots. In real-world scenarios, robots perceive the world mainly through three senses -- vision, hearing and touch. The fundamental challenge in fusing this multi-modal information is that visual, auditory and tactile signals take different forms that do not match each other. The main problems that Liu is working on are how to fuse heterogeneous multi-modal data and how to integrate perception and action in a highly non-linear way once active perception is introduced.

Advance the mass application of mobile robotics through collaboration among industry, academia and research

The event closed with a panel discussion on “Challenges in the Industrialization of Mobile Robotics”. The participants were Guofeng Zhang, professor at Zhejiang University; Frank Dellaert, full professor at the Georgia Institute of Technology; Mingyang Li, R&D Director at Alibaba AI Labs; Kai Ni, founder and CEO of HoloMatic; and Xin Zhao, Business Line Manager at TÜV SÜD Certification and Testing (China) Corporation of TÜV SÜD Greater China.

Panel Discussion on “Challenges in the Industrialization of Mobile Robotics”

Mingyang Li said that, given the current technological progress and level of industrialization of mobile robots, industrial robots could only perform a single task at present and the next step should be to integrate different technologies to make robots multi-functional.

However, Professor Frank Dellaert believed that the maturity standards for mobile robotics varied with the product and use case. For instance, if a drone's task is to follow the movements of its user, the current technology is mature enough for that use case; but if the aim is to make autonomous driving safe, reliable and attractive to consumers, the bar for technological maturity is very high.

Then, Kai Ni pointed out the issue of fragmentation of software and hardware. Fragmentation of software and hardware led to the fragmentation of algorithms in various vertical areas. Each robot manufacturer has developed its own actuators, resulting in its own unique SLAM, perception and decision-making systems.

Xin Zhao believed that there were four preconditions for industrialization -- needs, business model, industrial policy and standards. Traditional industrial robots are mainly about mechanics, but mobile robotics is more about software, so software quality and software security, among other factors, should be considered in mobile robotics.

As an interdisciplinary field, mobile robotics includes the fields of computer science, mechanical engineering and electronic engineering, integrates software and hardware, and cuts across the boundaries of industry, academia and research. Professor Guofeng Zhang stated that it would be difficult for a research team at a university to develop a marketable product directly, so the team has to collaborate with enterprises to facilitate the mass application of new technologies. The integration among industry, academia and research means that each side should fully exploit its own strength -- colleges and research institutes excel at theoretical and exploratory research whereas enterprises have a better understanding of the needs in application and are adept at engineering development. The close collaboration among them would create synergy and facilitate the application of technologies.

HoloMatic, the host of the event, was founded in June 2017. Building on cutting-edge artificial intelligence and automotive technologies, the company has full-stack R&D capabilities ranging from by-wire control and multi-sensor fusion to core algorithm design. HoloMatic aims to promote the mass application of autonomous driving with a focus on two major use cases, highway pilot and valet parking, and strives to provide series production-ready autonomous driving solutions driven by local data. At the beginning of this year, HoloMatic set up a research institute in Wuhan, which has entered into collaboration with several universities in the region, including Wuhan University, to conduct exploratory research on topics related to autonomous driving.